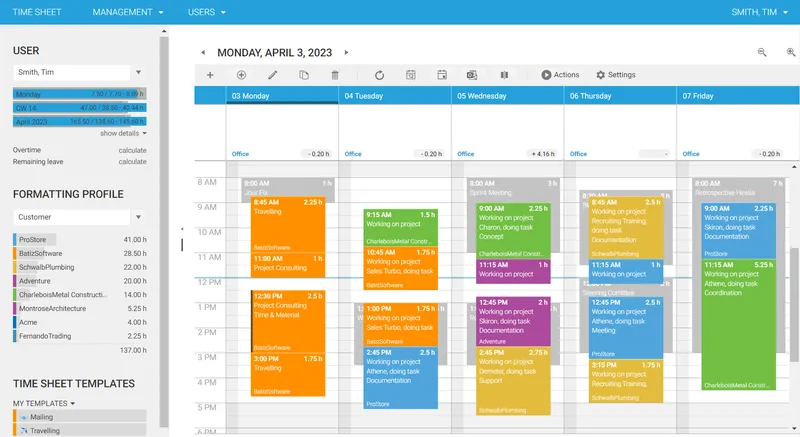

Project time tracking is crucial for monitoring project progress, costs, and profitability, and it directly affects your revenue. The intuitive, user-friendly calendar simplifies time tracking so that you and your team can focus on the actual work rather than on logging hours.

One size does NOT fit all when it comes to time tracking! Recognizing the unique needs and processes of your business, time cockpit offers exceptional customization capabilities, allowing you to integrate it into your existing workflows. Schedule an appointment to learn more.

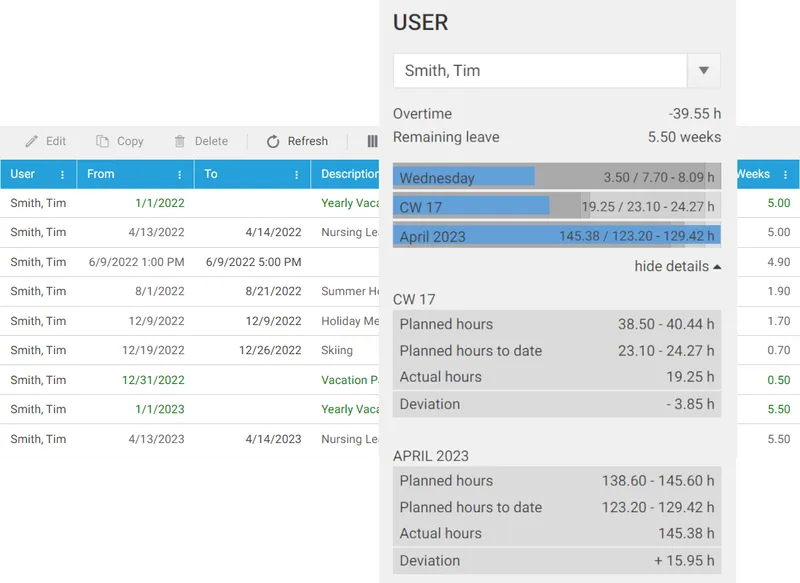

Time cockpit offers a unified solution for both project time tracking and attendance time tracking. Because the two are consolidated, users are relieved of the burden of maintaining separate time logs. Features such as working time statistics, working time violations, and absence time management let organizations manage and monitor employee time data across the different aspects of their work.

Companies require flexibility in their time tracking tools. At time cockpit, we understand this need for customization and have built our platform from the ground up to be highly adaptable to individual requirements. Do you track your time on something other than projects and tasks, or do you need additional business logic? Take a look at our Made-to-Measure offer. We provide tailored time tracking that aligns with your specific needs, and we help you create the data structures and workflows that support the way you work.

If you have in-house technical expertise, we provide you with the tools and know-how to customize time cockpit yourself, for example with a script such as the following vacation approval action:

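```python
# DateTime is the .NET type. This import assumes an IronPython-style
# scripting host and may be unnecessary if the host already provides it.
from System import DateTime


def approveVacation(actionContext):
    dc = actionContext.DataContext
    # Nothing to do if the action was invoked without input records.
    if dc.InputSet is not None:
        for v in dc.InputSet:
            # Stamp the approval time (UTC) and record the approving user.
            v.USR_ApprovedTimestamp = DateTime.UtcNow
            v.USR_RequestForApprovalSentTimestamp = v.USR_ApprovedTimestamp
            v.USR_ApprovedBy = dc.Environment.CurrentUser.Username
            dc.SaveObject(v)
```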
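To see what this action does without a time cockpit instance at hand, here is a minimal sketch in plain Python. `FakeDataContext` and the `SimpleNamespace` objects are hypothetical stand-ins invented for illustration, not part of the time cockpit API, and `datetime` replaces the .NET `DateTime` type. The logic mirrors the script above: every record in the input set is stamped with the approval time and approver, then saved.

```python
from datetime import datetime, timezone
from types import SimpleNamespace


class FakeDataContext:
    """Hypothetical stand-in for the data context; not the real API."""

    def __init__(self, input_set, username):
        self.InputSet = input_set
        self.Environment = SimpleNamespace(
            CurrentUser=SimpleNamespace(Username=username)
        )
        self.saved = []  # records every object passed to SaveObject

    def SaveObject(self, obj):
        self.saved.append(obj)


def approve_vacation(action_context):
    """Same steps as the action above, using datetime for the timestamp."""
    dc = action_context.DataContext
    if dc.InputSet is None:
        return
    for v in dc.InputSet:
        v.USR_ApprovedTimestamp = datetime.now(timezone.utc)
        v.USR_RequestForApprovalSentTimestamp = v.USR_ApprovedTimestamp
        v.USR_ApprovedBy = dc.Environment.CurrentUser.Username
        dc.SaveObject(v)


# Two empty placeholder vacation records, approved by a made-up user.
vacations = [SimpleNamespace(), SimpleNamespace()]
dc = FakeDataContext(vacations, "alice")
approve_vacation(SimpleNamespace(DataContext=dc))
print(len(dc.saved), vacations[0].USR_ApprovedBy)  # prints "2 alice"
```

Note that the request-sent timestamp is set to the same value as the approval timestamp, exactly as in the original script.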