Time cockpit is cloud-based time tracking software designed specifically for service companies. It combines work hour tracking, project time tracking, and attendance management in one centralized platform.

With the Activity Tracker, time cockpit supports automatic activity logging to avoid missing hours. The solution is highly flexible and can be tailored to meet the specific needs of your business – from customized work time models to a complete made-to-measure solution.

The cloud-based project time tracking in time cockpit is designed to give you full control over time and budget. Manage tasks, define hourly rates for customers, projects, and activities, and automate invoicing through transparent billing.

Thanks to flexible customization and seamless integration with tools like Jira, Azure DevOps, and Microsoft Dynamics, you can manage projects efficiently and profitably. Overtime, absences, and work time statistics are automatically recorded, enabling precise planning and billing.

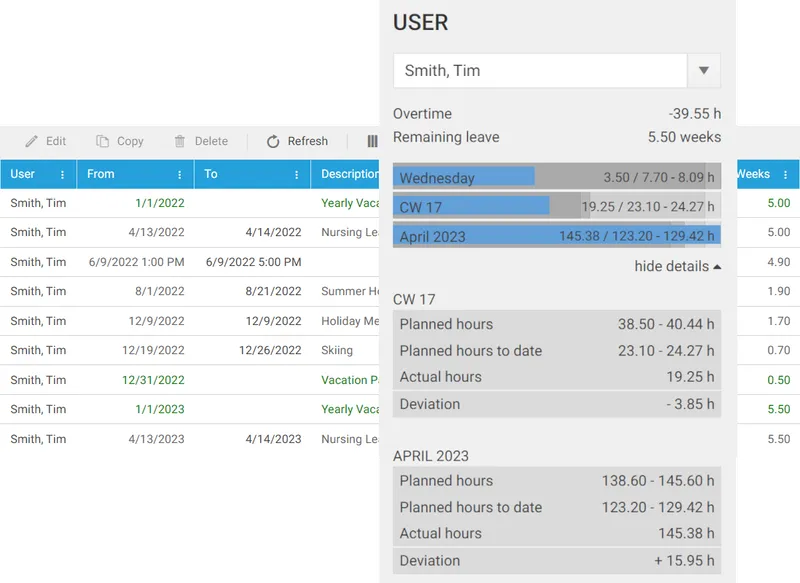

Time cockpit makes digital work time tracking a breeze. Our system provides precise documentation that also meets complex requirements such as flexible work schedules or remote work.

With time cockpit, you can manage vacation, absences, and sick leave centrally and accurately, including automatic calculation of remaining vacation days and time balances. Overtime and breaks are also taken into account, so your time records are correct and complete.
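To make the idea of remaining vacation days and time balances concrete, here is a minimal sketch of the arithmetic involved. It is not time cockpit's actual implementation: the `WorkDay` class, the function names, and the sample figures are all hypothetical, and the sketch assumes that breaks have already been deducted from the worked hours.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class WorkDay:
    """One day of a hypothetical time sheet (not a time cockpit type)."""
    day: date
    worked_hours: float  # hours booked, breaks already deducted (assumption)
    target_hours: float  # hours expected by the work time model


def remaining_vacation(entitlement_days, carried_over_days, taken_days):
    """Vacation days still available in the current year."""
    return entitlement_days + carried_over_days - taken_days


def time_balance(days):
    """Sum of worked minus target hours; positive values mean overtime."""
    return sum(d.worked_hours - d.target_hours for d in days)


# Made-up sample data for illustration only.
week = [
    WorkDay(date(2024, 3, 4), worked_hours=8.5, target_hours=8.0),
    WorkDay(date(2024, 3, 5), worked_hours=7.0, target_hours=8.0),
    WorkDay(date(2024, 3, 6), worked_hours=9.0, target_hours=8.0),
]

print(time_balance(week))               # 0.5
print(remaining_vacation(25, 3, 11.5))  # 16.5
```

A real work time model would also need to handle public holidays, part-time schedules, and half-day absences, which this sketch leaves out.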

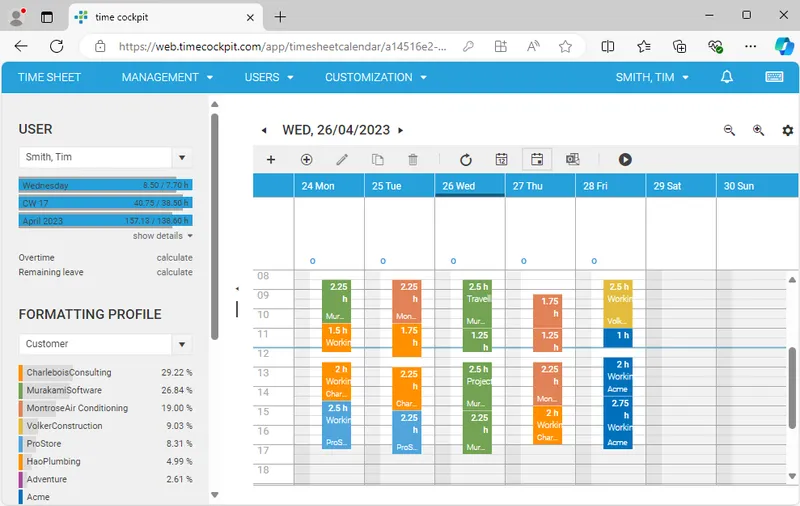

Time cockpit is browser-based and works seamlessly on PC, Mac, and mobile devices – ready to use anytime and anywhere.

The integrated Activity Tracker automatically captures your used applications, edited files, and activities. This data helps you document your work time efficiently – whether you are in the office, working from home, or on the go.

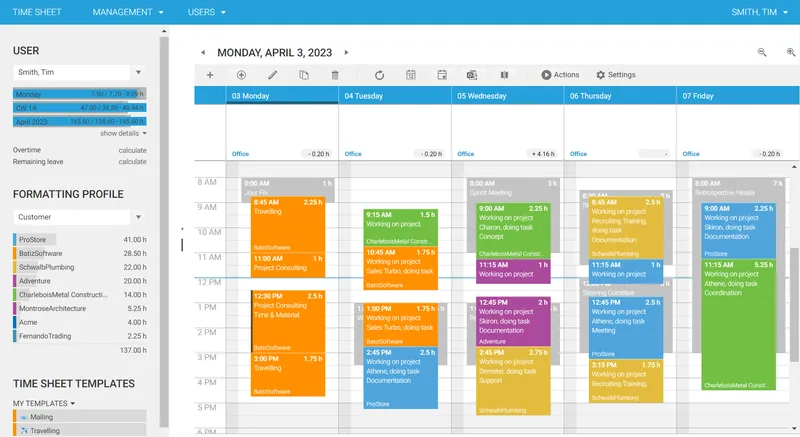

The graphic calendar is your central hub for digital time tracking. Switch easily between day, week, and month views and gain a clear overview of your time entries, work time statistics, absences, and home office days.

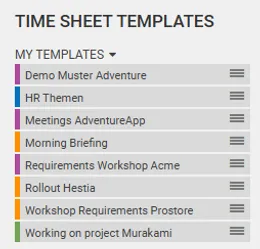

Time cockpit provides seamless Office 365 integration, allowing you to convert your Outlook calendar appointments into time entries with just a double-click. The intuitive drag-and-drop function makes it especially easy to move, copy, or edit entries. With format templates and recurring entry templates, you save additional time and ensure comprehensive time tracking.

Recurring tasks such as administrative duties or team meetings can be recorded even faster using recurring entry templates. Create templates for these entries and drag them directly into the graphic calendar. This feature not only saves time but also ensures that all tasks are fully documented.

Time cockpit offers a powerful Web API that allows easy integration into existing systems. With support for REST, JSON, and HTTP, you can seamlessly connect your time tracking software with tools like Jira, Azure DevOps, SAP, and Microsoft Dynamics. Automate processes and optimize your IT landscape to enhance efficiency and productivity.
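As a rough illustration of what an integration over REST, JSON, and HTTP can look like, the sketch below prepares an HTTP request that would create a time entry. The endpoint URL, the JSON field names, and the bearer-token authentication are placeholders chosen for this example, not the documented time cockpit Web API; consult the official API documentation for the real routes and payloads. The request is only built, not sent.

```python
import json
from datetime import datetime
from urllib.request import Request

# Hypothetical endpoint, used only for illustration.
API_URL = "https://example.invalid/timesheets"


def build_time_entry_request(begin, end, description, token):
    """Build (but do not send) a JSON POST request for a new time entry.

    All field names below are assumptions made for this sketch.
    """
    payload = {
        "begin": begin.isoformat(),
        "end": end.isoformat(),
        "description": description,
    }
    return Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": "Bearer " + token,
        },
        method="POST",
    )


request = build_time_entry_request(
    datetime(2024, 3, 4, 9, 0),
    datetime(2024, 3, 4, 10, 30),
    "Sprint planning",
    "demo-token",
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Passing the prepared request to `urllib.request.urlopen` would send it. An integration with a tool such as Jira or Azure DevOps would typically map that tool's work items to payloads of this kind.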

Time cockpit is built on an ISO 27001-certified infrastructure that meets the highest security standards. Your data is always protected through automatic backups and disaster recovery. The solution is scalable and suitable for small teams and large organizations looking for a flexible and audit-proof time tracking system.

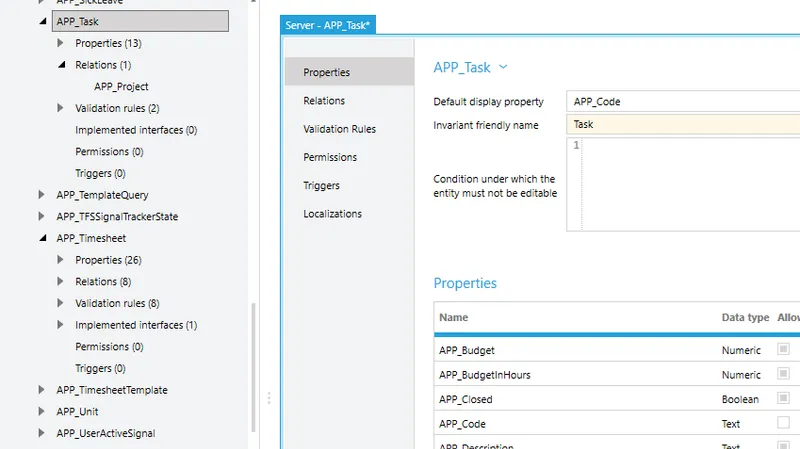

Businesses need flexible time tracking. With time cockpit, you get a highly customizable time tracking system. Add data models, reports, and fields without programming knowledge. Use IronPython to create complex business logic tailored to your needs. Thanks to multilingual support, time cockpit is easy to use in international teams.
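The kind of business logic you might express in a Python-style script can be sketched as a simple validation rule. This is plain Python written so that it should also run on IronPython; it does not use time cockpit's scripting objects. The function name, the 10-hour limit, and the error messages are assumptions made for illustration.

```python
from datetime import datetime, timedelta

# Assumed company policy for this sketch, not a time cockpit default.
MAX_ENTRY_LENGTH = timedelta(hours=10)


def validate_time_entry(begin, end, project):
    """Return a list of rule violations; an empty list means the entry is valid."""
    errors = []
    if end <= begin:
        errors.append("End must be after begin.")
    elif end - begin > MAX_ENTRY_LENGTH:
        errors.append("Entry exceeds 10 hours.")
    if not project:
        errors.append("A project is required.")
    return errors


# A valid entry produces no errors.
print(validate_time_entry(
    datetime(2024, 3, 4, 9, 0), datetime(2024, 3, 4, 17, 0), "Website relaunch"))

# An entry that ends before it begins and has no project violates two rules.
print(validate_time_entry(
    datetime(2024, 3, 4, 9, 0), datetime(2024, 3, 4, 8, 0), ""))
```

Returning a list of messages instead of raising on the first problem lets a user see every violation at once.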

With our Made-to-Measure offer, we provide the opportunity to adapt your time tracking to your specific needs. Schedule a meeting to learn more.

Do you have your own technical expertise? We’re happy to help you customize time cockpit yourself.